Best Of

Re: The Bi-Monthly Environment Art Challenge | September - October (80)

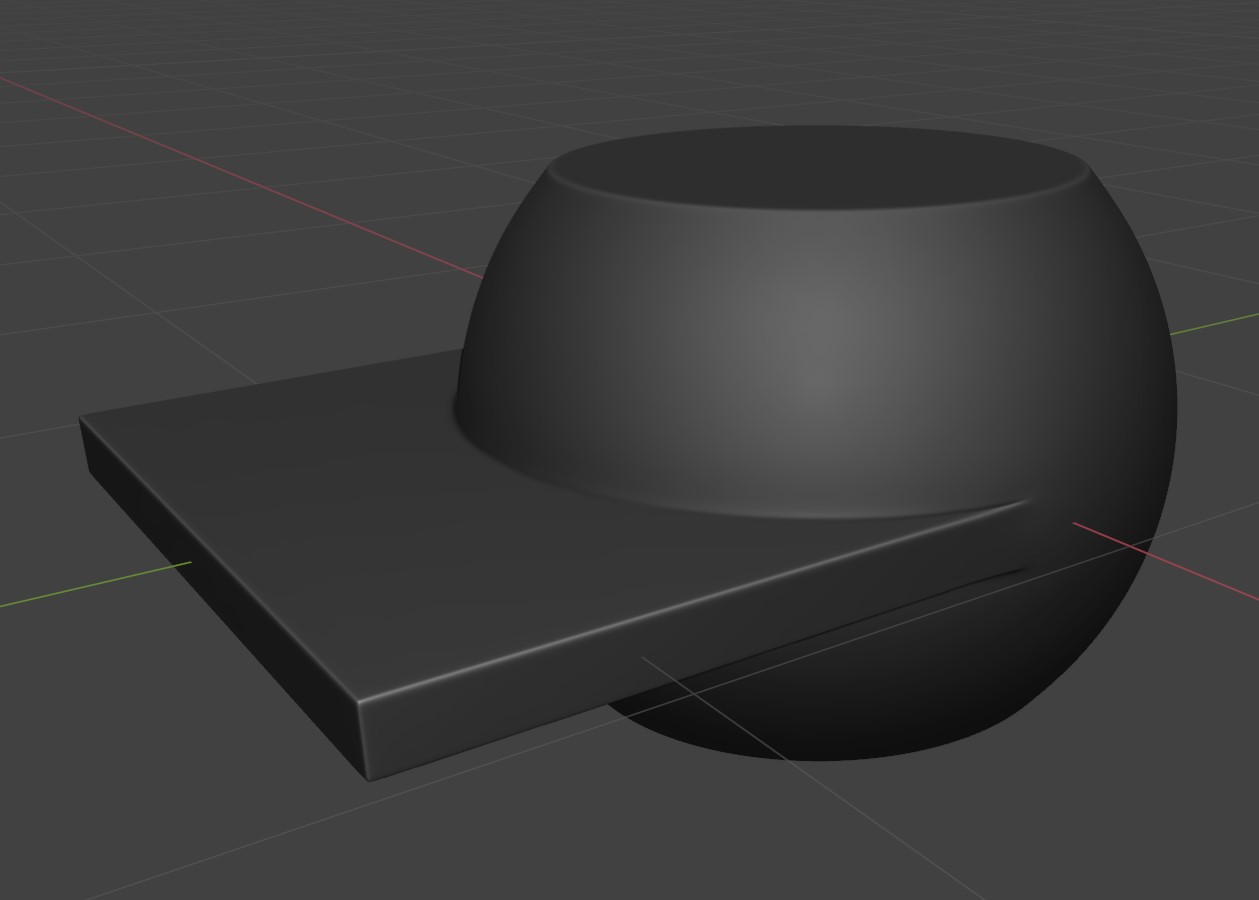

Hi @orangesky, I didn't use boolean operations when creating the assets. Other than the sarcophagus (sculpted, subd modeling), it's mostly box-modeled lowpoly meshes modified to have rounded/beveled edges (bevel modifiers + face-weighted normals in Blender). This shading was then baked down to the lowpoly. The benefit of this approach is that it's pretty fast. In some cases an instance of the same mesh can be reused as the lowpoly (just without the modifiers). Surface details are then added during texturing.

A good case for booleans would be prototyping complex shapes or breaking up a mesh with insets/cutouts. There are a lot of routes to a nicely shaded mesh (to bake down or to use as is), depending on the case. One could either process the new edges with a modifier or use autosmooth with a fitting threshold to get hard edges at the cuts. If going for a sub-d mesh, either apply the boolean operations and manually clean up the topology, or rebuild a clean mesh on top of the boolean prototype. There is also the method of remeshing the boolean result and polishing the edges. This requires high mesh density (and high-resolution input meshes for curved surfaces to avoid faceting); I see people doing this in ZBrush. That's what came to mind, I'm sure there are more ways to incorporate boolean operations into one's workflow.
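To make the "fitting threshold" idea concrete, here is a minimal Python sketch of how an angle threshold can separate hard edges from smooth ones. It is only an illustration and not Blender's actual implementation: the function names and the 30 degree default are assumptions made up for this example.

```python
import math

def face_normal(a, b, c):
    """Unit normal of triangle (a, b, c), assuming counter-clockwise winding."""
    u = [b[i] - a[i] for i in range(3)]
    v = [c[i] - a[i] for i in range(3)]
    # Cross product u x v
    n = [u[1] * v[2] - u[2] * v[1],
         u[2] * v[0] - u[0] * v[2],
         u[0] * v[1] - u[1] * v[0]]
    length = math.sqrt(sum(x * x for x in n))
    return [x / length for x in n]

def angle_between_deg(n1, n2):
    """Angle in degrees between two unit normals."""
    dot = sum(x * y for x, y in zip(n1, n2))
    dot = max(-1.0, min(1.0, dot))  # guard against rounding error
    return math.degrees(math.acos(dot))

def is_hard_edge(n1, n2, threshold_deg=30.0):
    """Hypothetical rule: an edge is hard if its faces meet at more than the threshold."""
    return angle_between_deg(n1, n2) > threshold_deg

# A floor face and a wall face meeting at a right angle, as at a boolean cut.
floor = face_normal((0, 0, 0), (1, 0, 0), (0, 1, 0))
wall = face_normal((0, 0, 0), (0, 1, 0), (0, 0, 1))

# A face tilted by only 10 degrees, as on a gently curved surface.
tilt = math.radians(10.0)
gentle = [math.sin(tilt), 0.0, math.cos(tilt)]

cut_is_hard = is_hard_edge(floor, wall)
curve_is_hard = is_hard_edge(floor, gentle)
```

With a 30 degree threshold the 90 degree cut comes out hard while the 10 degree bend stays smooth, which is the behaviour you would be tuning the threshold for.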

I recommend researching how models similar to what you want to make were built. Maybe the artists show some progress pics or describe their process, from which you can learn about their workflows. Check out Franky Poligons Sketchbook for some well-written examples.

Good luck!

Fabi_G

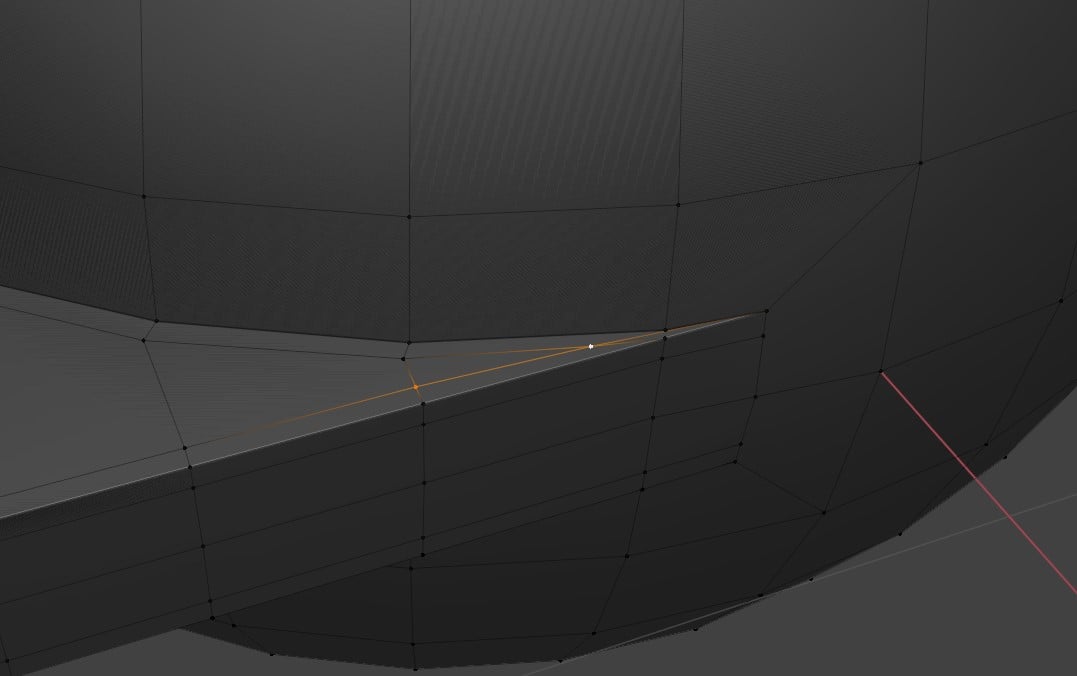

Re: How The F*#% Do I Model This? - Reply for help with specific shapes - (Post attempt before asking)

Use the existing geo and cut in between.

wirrexx

Re: Sketchbook: thomasp

Current work in progress - this is how the sauce is made. Hooray for Blender's curves. One day of work so far.

thomasp

Re: Normal map issue.

Did you triangulate the mesh before baking? If not, my guess is it's due to the applications triangulating it differently.

It's best to give more information (wireframe, UVs, normal map) to reduce the guesswork. Ideally attach a problematic part so people can reproduce it on their end.
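For anyone wondering why the triangulation matters, here is a small Python sketch, assuming a made-up non-planar quad (the coordinates are purely illustrative). The same four corners give a different surface depending on which diagonal the quad is split along, so a normal map baked against one split will not match a mesh rendered with the other.

```python
def midpoint(p, q):
    """Point halfway between p and q."""
    return tuple((p[i] + q[i]) / 2 for i in range(3))

# A non-planar quad: corner c is lifted out of the plane of the other three.
a = (0.0, 0.0, 0.0)
b = (1.0, 0.0, 0.0)
c = (1.0, 1.0, 1.0)
d = (0.0, 1.0, 0.0)

# The two triangles share an edge along one of the diagonals,
# so the surface at the quad's center lies on that diagonal.
center_split_ac = midpoint(a, c)  # triangles (a, b, c) and (a, c, d)
center_split_bd = midpoint(b, d)  # triangles (a, b, d) and (b, c, d)

# Same position when seen from above, different height.
height_difference = center_split_ac[2] - center_split_bd[2]
```

Triangulating before export pins the diagonal down, so the baker and the target application see the same surface.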

Fabi_G

Re: Witcher Fan Art - Skelige Island

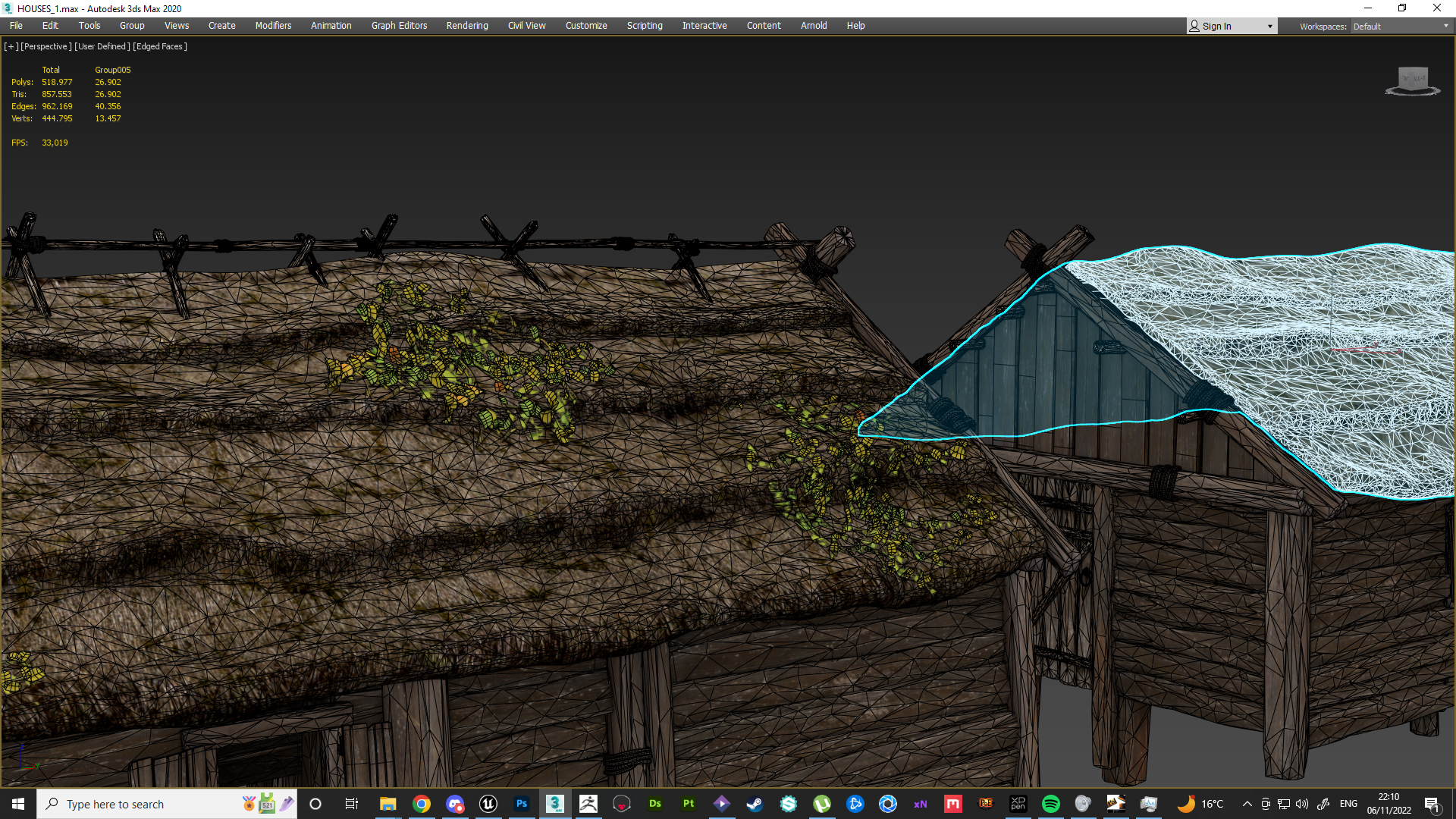

Ask and you shall receive :D

I was looking at Quixel breakdowns, probably as we all did :D But I found it very difficult to replicate, and extremely tedious. So I went with one mesh and heavy displacement.

So first of all, of course, ZBrush. I know you can sculpt in UE5, but I love ZBrush so we go with that.

As you can see, it's nothing too complicated. I'd try it with cards, but I'd need something like a bio-engineer here to help me with the shaders and make it as fancy as possible.

When you use the modeling tool, go to Displacement and use a low value like 10-15 with a heightmap. The texture for the roof was also done in Photoshop, using some textures from textures.com. Not even Substance, mate :D For such a noisy texture I wouldn't use Substance Designer.

Hallazeall
