Making sense of hard edges, uvs, normal maps and vertex counts

Of interest to most who will read this thread, I wrote an extensive tutorial that covers this topic and many other common baking issues. It's geared towards baking in Toolbag, but most of the Basics and Best Results sections apply universally. Check it out on the Marmoset site: https://www.marmoset.co/posts/toolbag-baking-tutorial/

---

WATCH THIS FIRST:

Alec Moody put together some fantastic video tutorials to go along with the release of Handplane. They do a very good job of explaining a lot of the concepts in this thread, along with providing some great real-world examples.

https://www.youtube.com/watch?v=ciXTyOOnBZQ

---

Ok, so this has always been a common topic on polycount/tech talk. How should you smooth your model? Smooth the entire thing? Use hard edges? It's something that has had a certain amount of confusion surrounding it, so I am going to try and do a thorough writeup here.

Terms

First things first, let’s define some terminology. “Cage” can be a little confusing, and app specific, so I’ll try not to use that. There are two basic methods for projecting a normal map bake.

- “Averaged projection mesh”

- “Explicit mesh normals”

If you’re using the “Explicit mesh normals” method, but making sure to avoid any hard edges/smoothing group splits, all you’re really doing is using an “Averaged projection mesh” without the inherent benefits of an “Averaged projection mesh” (like the ability to use hard edges). Suggesting others should do so is akin to saying “never use triangles”, or “always use quads”. It’s simply bad advice that doesn’t tell the whole story, and confuses novice users more than it helps.

How do I know which method I am using?

- In Max:

If you go into RTT options and turn on “Use offset” you are essentially switching from “Averaged projection mesh” to “Explicit mesh normals” for projection.

- In Maya

If you change the setting to “match using: Surface Normals” you are using “Explicit mesh normals” for projection.

- In Xnormal

If you load your own cage, exported from Max for instance, you are using an “Averaged projection mesh”. If you set up your own cage within Xnormal’s 3d editor and save it out, you are also using an “Averaged projection mesh”.

So why would you ever use “Explicit mesh normals” for projection? Well, at first glance the quick Xnormal ray trace settings seem faster, but what you gain in a minute amount of workflow speed, you lose in flexibility. You can’t use hard edges without gaps, and you don’t get a nice visual representation of your projection mesh like you would in Max’s projection modifier or XN’s cage editor. In Maya you get the same “envelope view” either way.

Some users will use “Explicit mesh normals” as a means to get around “waviness” or “skewed details” by using hard edges, and thus opting for gaps/seams instead of projection errors. This sort of mentality is flawed however, and understanding how your mesh normals work/affect projection direction is usually the solution. See thread:

Understanding averaged normals and ray projection/Who put waviness in my normal map?

When you use an “Averaged projection mesh”, the use of hard edges is completely irrelevant to the baked end result. Both methods will look exactly the same.

Vertex use

When considering the use of hard edges, you need to consider the implications. Wherever you have a hard edge, or a uv seam, your “in-game” vertices are doubled in those areas. More than your triangle count or your modeling-app vertex count, the “in-game” vertex count is what really affects performance in most game engines.

Some general rules with that in mind:

- To avoid artifacts, you must split your uvs where you use hard edges.

- You do not, however, NEED to use hard edges wherever you split your uvs (as some may suggest).

- With an “Averaged projection mesh” you can use hard edges along uv borders with no negative side effects. Because the verts are already doubled at your uv seams, you get it for free.

- Your verts do not “triple” or “quadruple” if you have both a uv seam and a hard edge in the same place.

These are the basic facts of life when dealing with uv seams, hard edges, and “in game” vertex count. There are other things that will contribute, like material seams and so forth but that is a different topic.
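The vertex rules above can be checked with a small sketch. It assumes a simplified model of how an engine builds its vertex buffer: every face corner is a (position, normal, uv) triple, and two corners can only share one in-game vertex if all three match. The `two_quads` helper and its ids are hypothetical test data, not anything from a real exporter.

```python
def count_ingame_vertices(faces):
    """Count the vertices a renderer would need for this mesh.

    Each face is a list of corners, and each corner is a
    (position_id, normal_id, uv_id) tuple. Two corners can share one
    in-game vertex only if all three ids match.
    """
    return len({corner for face in faces for corner in face})


def two_quads(hard_edge, uv_seam):
    """Build two quads sharing one edge (positions 1 and 4).

    Hypothetical test mesh: a hard edge gives each face its own normal on
    the shared positions, a uv seam gives each face its own uv there.
    """
    shared = {1, 4}
    faces = []
    for face_index, quad in enumerate(([0, 1, 4, 3], [1, 2, 5, 4])):
        corners = []
        for p in quad:
            normal_id = (p, face_index) if hard_edge and p in shared else (p, None)
            uv_id = (p, face_index) if uv_seam and p in shared else (p, None)
            corners.append((p, normal_id, uv_id))
        faces.append(corners)
    return faces


for hard_edge in (False, True):
    for uv_seam in (False, True):
        total = count_ingame_vertices(two_quads(hard_edge, uv_seam))
        print(f"hard_edge={hard_edge!s:5} uv_seam={uv_seam!s:5} -> {total} in-game verts")
```

In this toy mesh the fully soft, unsplit case needs 6 vertices and each of the other three cases needs 8. A uv seam alone already costs the extra two, and adding a hard edge on top of that seam costs nothing more.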

When using a synced normals workflow, meaning that your normal map baker and game engine are synced up to provide accurate, reliable display of normal maps between the two, you can often get away with fewer uv seams, because you no longer need to use as many hard edges to avoid smoothing errors. In some cases you will not need to use any hard edges at all! This is really awesome, and makes a huge difference when it comes to production speed and quality, as it enables you to simply model, and not worry about doing 20 test bakes to avoid smoothing errors and things of that nature.

However, and this is really important, just because you have a synced workflow does not mean you should NEVER use hard edges(again, assuming you’re using an “Averaged projection mesh”). In fact, there is simply no drawback to using hard edges along your uv seams with a synced workflow. None whatsoever, it doesn’t increase your in-game vertex count(as long as the uv layout is exactly the same between both meshes).

But why would you want to? Aren’t hard edges a thing of the past and only needed to correct old broken workflows? Not really, there are a variety of benefits to using hard edges even with a synced workflow, and most importantly, no drawbacks. Here are a few of the benefits:

- Less extreme gradients in your normal map content, which makes it easier to pull a “detail map” out of crazybump without all of those artifacts from the extreme shading changes.

- Less extreme gradients, which means you will get better, more accurate results when doing LOD meshes that share the same texture, as the normal map doesn’t need to rely so heavily on the exact mesh normals. You may need to have a separate normal map baked for LOD meshes otherwise, which uses up more VRAM.

- Better texture compression, because well, you guessed it, less extreme gradients.

- Will reduce what I like to call “resolution based smoothing errors” that happen when you have a small triangle but not enough resolution to properly represent the shading. These usually show up as “little white triangles” ingame. In the same regard it improves how well your normal map will display with smaller mip maps.

It’s actually very easy to add hard edges along your uv borders with a Max/Maya script. In Max there is a function in Renderhjs’ awesome Textools script set: http://www.renderhjs.net/textools/ . In Maya you can use this script written by Mop/Paul Greveson: https://dl.dropbox.com/u/499159/UVShellHardEdge.mel or this one from Jon Stewart: https://jonathonstewart.blogspot.com/2012/10/script-harden-edges-of-all-uv-borders.html . So it’s not a huge workflow hit to do any of this stuff, it’s actually really simple and easy. You may occasionally need to go in and set some of your edges back to soft, though, in cases where you have complex partial mirroring or on the “seam” edge of cylinders or other soft objects.
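For anyone curious what those scripts have to work out, here is a rough standalone sketch of the core step: finding which edges sit on a uv border. This is not the code from Textools or either Maya script. The mesh format (faces as lists of `(position_id, uv_id)` corners) and the function name are assumptions made for illustration only.

```python
from collections import defaultdict


def uv_border_edges(faces):
    """Return the edges that lie on a uv shell border.

    faces: list of faces, each a list of (position_id, uv_id) corners in
    winding order. An edge is reported (as a sorted pair of position ids)
    when the faces sharing it disagree about the uv ids at its ends, or
    when only one face uses it (an open mesh border).
    """
    edge_uvs = defaultdict(list)
    for face in faces:
        count = len(face)
        for i in range(count):
            (p0, uv0), (p1, uv1) = face[i], face[(i + 1) % count]
            # Store every edge in a consistent direction so that the two
            # faces sharing it can be compared directly.
            if p0 <= p1:
                edge_uvs[(p0, p1)].append((uv0, uv1))
            else:
                edge_uvs[(p1, p0)].append((uv1, uv0))

    borders = set()
    for edge, uv_pairs in edge_uvs.items():
        if len(uv_pairs) == 1 or len(set(uv_pairs)) > 1:
            borders.add(edge)
    return borders


# Two quads sharing the edge between positions 1 and 4.
welded = [[(0, 0), (1, 1), (4, 4), (3, 3)], [(1, 1), (2, 2), (5, 5), (4, 4)]]
# Same quads, but the second face gets its own uvs (11, 14) on that edge.
split = [[(0, 0), (1, 1), (4, 4), (3, 3)], [(1, 11), (2, 2), (5, 5), (4, 14)]]

print((1, 4) in uv_border_edges(welded))
print((1, 4) in uv_border_edges(split))
```

The edges this returns are the ones you would set to hard. Everything else stays soft, which is why the whole operation fits in a one-click script.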

Now you may be thinking to yourself “But I’m using a synced workflow, I don’t need to use any hard edges” and you would be correct. If the benefits brought up above do not appeal to you in any way, and you’re using a synced workflow, there is absolutely no reason that you need to use hard edges. There is also absolutely no reason that you need to avoid hard edges, provided you are using an “Averaged projection mesh”, which you should be doing!

Destructive baking workflows

Ok, so now I want to go over something I refer to as “destructive baking workflows”. To me, this is anything that needs to be redone entirely when you re-bake your mesh. Often in production you will get change requests that involve editing uvs and re-baking, or you will need to re-bake for some other reason. When you start doing a lot of stuff to your normal maps after the bake, you’re piling on work that needs to be redone if you get a change request. Oftentimes another artist entirely will need to work on your asset, and if you’ve done all sorts of voodoo magic to your maps after the bake, the poor SOB will have no idea how to reproduce your bake.

So what do I generally consider “Destructive baking workflows”?

- Painting out “wavy lines”. Again, when we understand how the projection direction of our mesh works this is easy to fix in geometry, and quite often results in a more attractive model.

- Hand editing your “cage” mesh. It’s fiddly work that will need to be re-done for every re-bake. Generally this means manually moving/scaling vertices in your cage. This is mostly typical of Max users. You can do it in Maya too, but it only affects projection distance, not angle like in Max, so it’s mostly useless. I think Xnormal works the same as Max. Generally speaking, if you’re getting errors that you need to do a lot of hand cage editing to fix, you can probably make a lot of improvements to your actual lowpoly geometry to improve the “bakability” of your model.

- Combining an “Averaged projection mesh” bake and an “Explicit mesh normals” bake when using hard edges, to get the projection error related benefits of explicit normals and the edge/seam benefits of averaged normals. This is a method I actually wrote about many years ago, but it really isn’t worth the hassle.

Note: I do not consider the basic “push values” in max or the “envelope %” settings in maya as “hand editing your cage” or “destructive baking workflows” as these settings will need to be made in some form regardless of baking method.

Now, there are a couple situations where you should be able to use these sort of “destructive baking workflows” without consequence:

- If you’re doing personal art and you know your model will never need to be rebaked.

- If you’re doing professional work and you know your model will never need to be rebaked. This is often almost impossible to know however, many things can happen during the course of production that would require a model be edited and thus rebaked.

IMAGES YOU SAY?

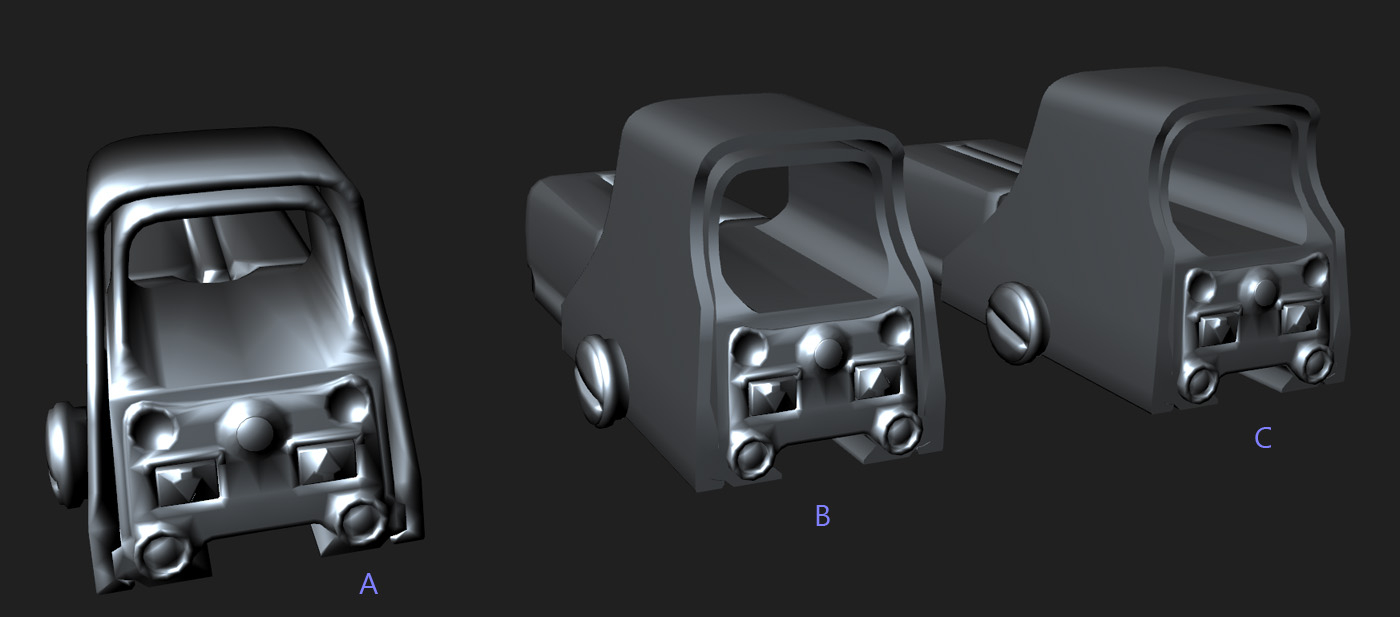

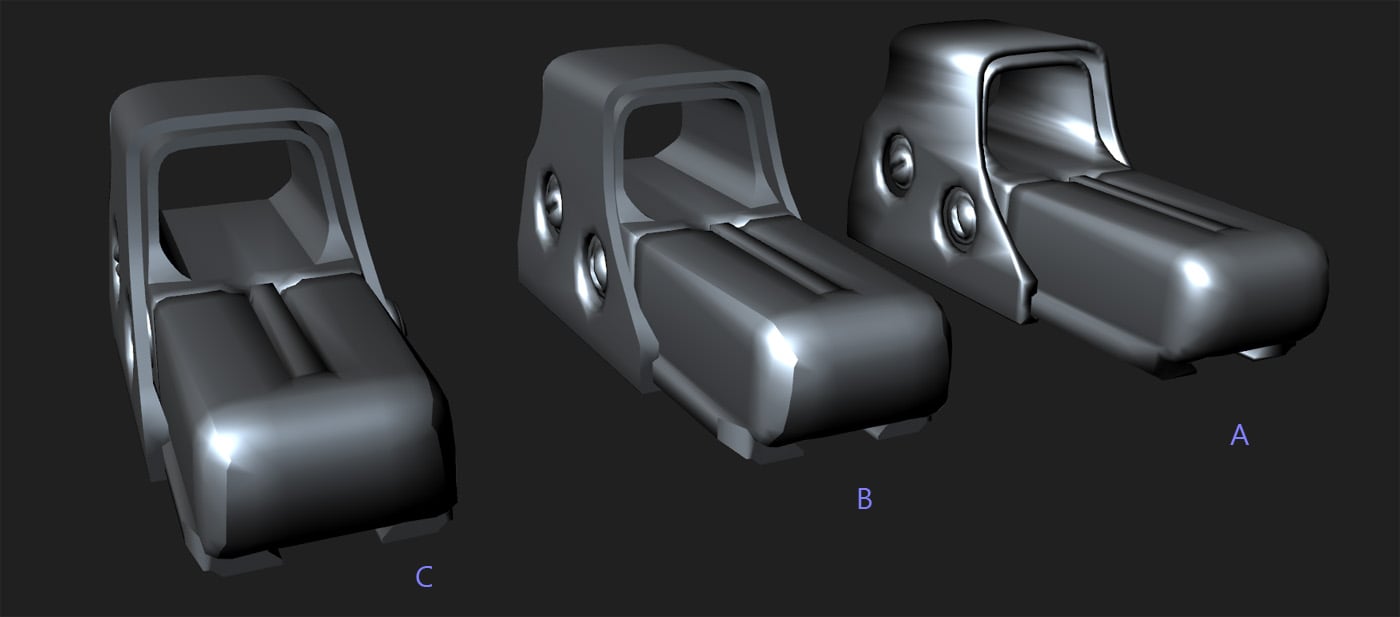

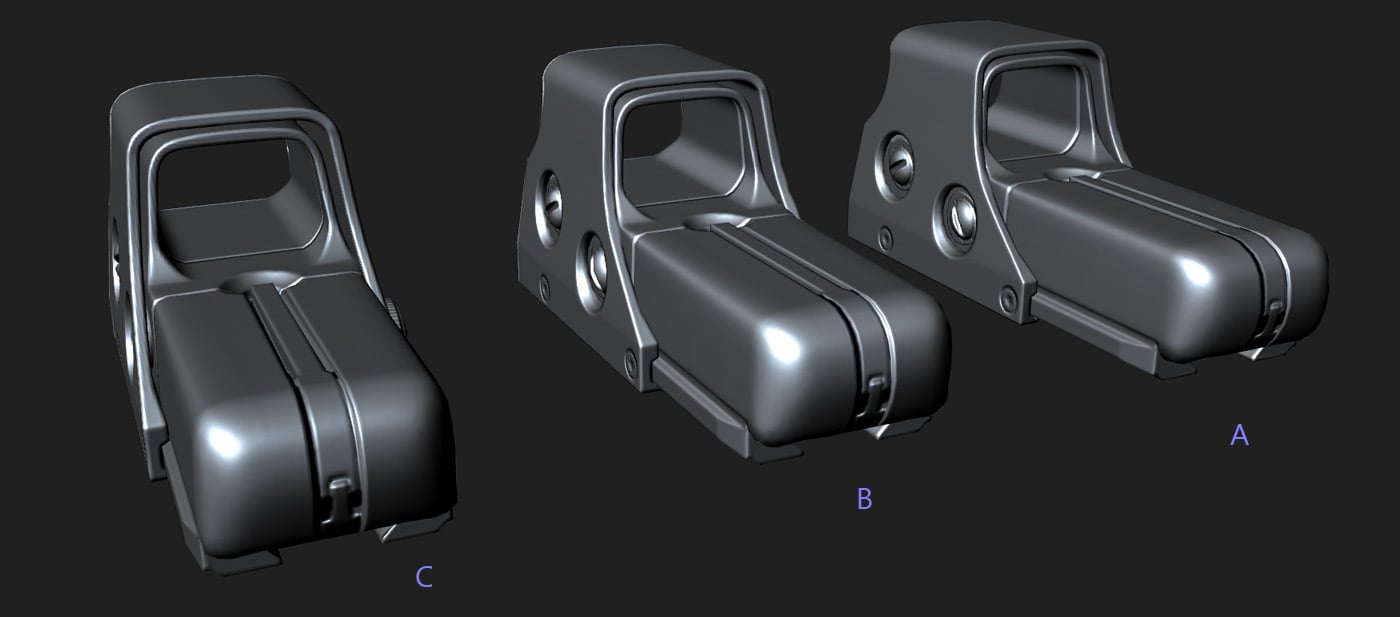

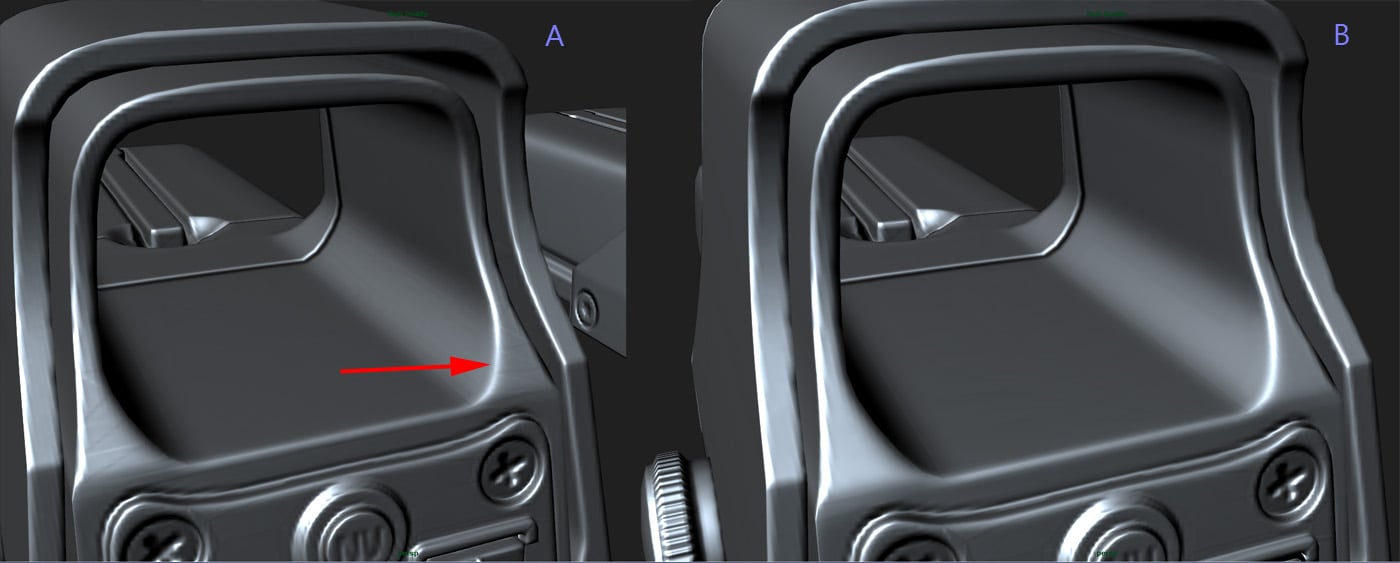

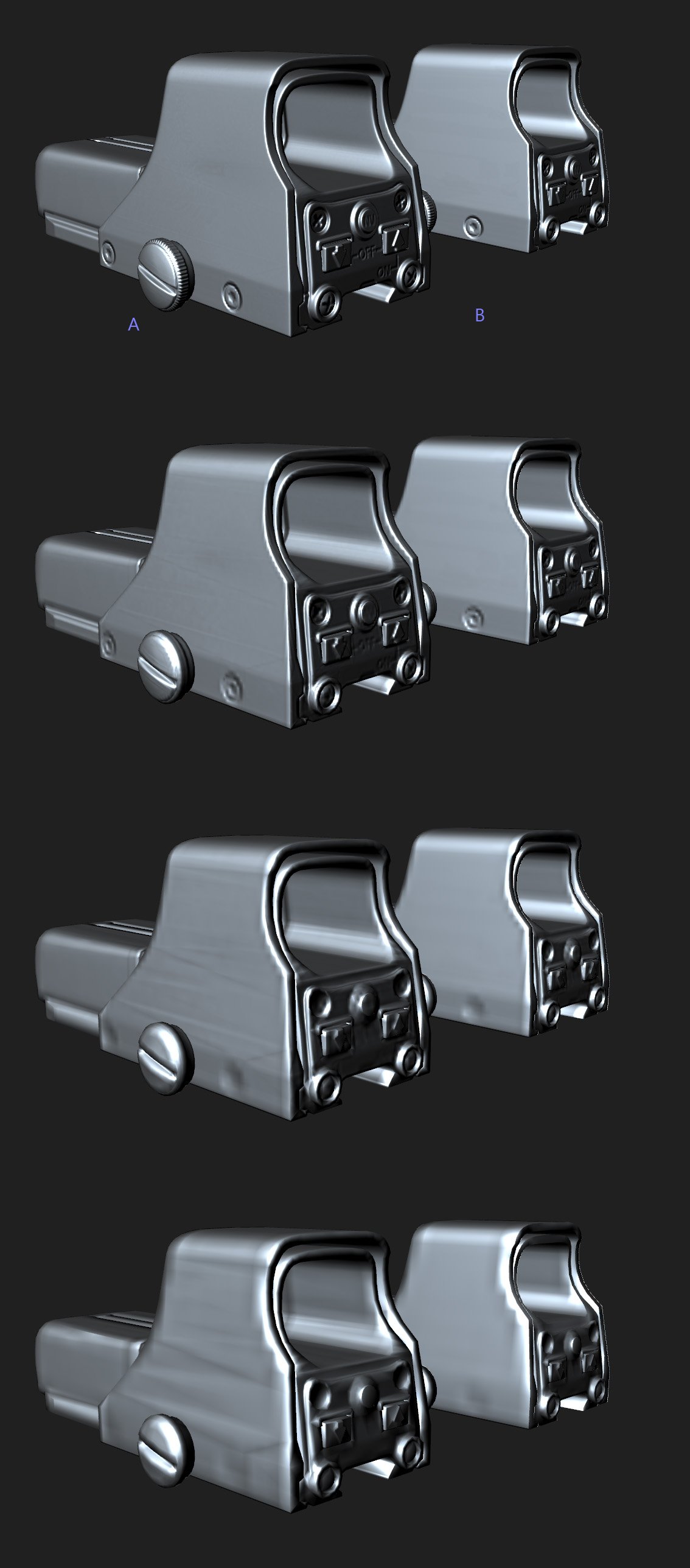

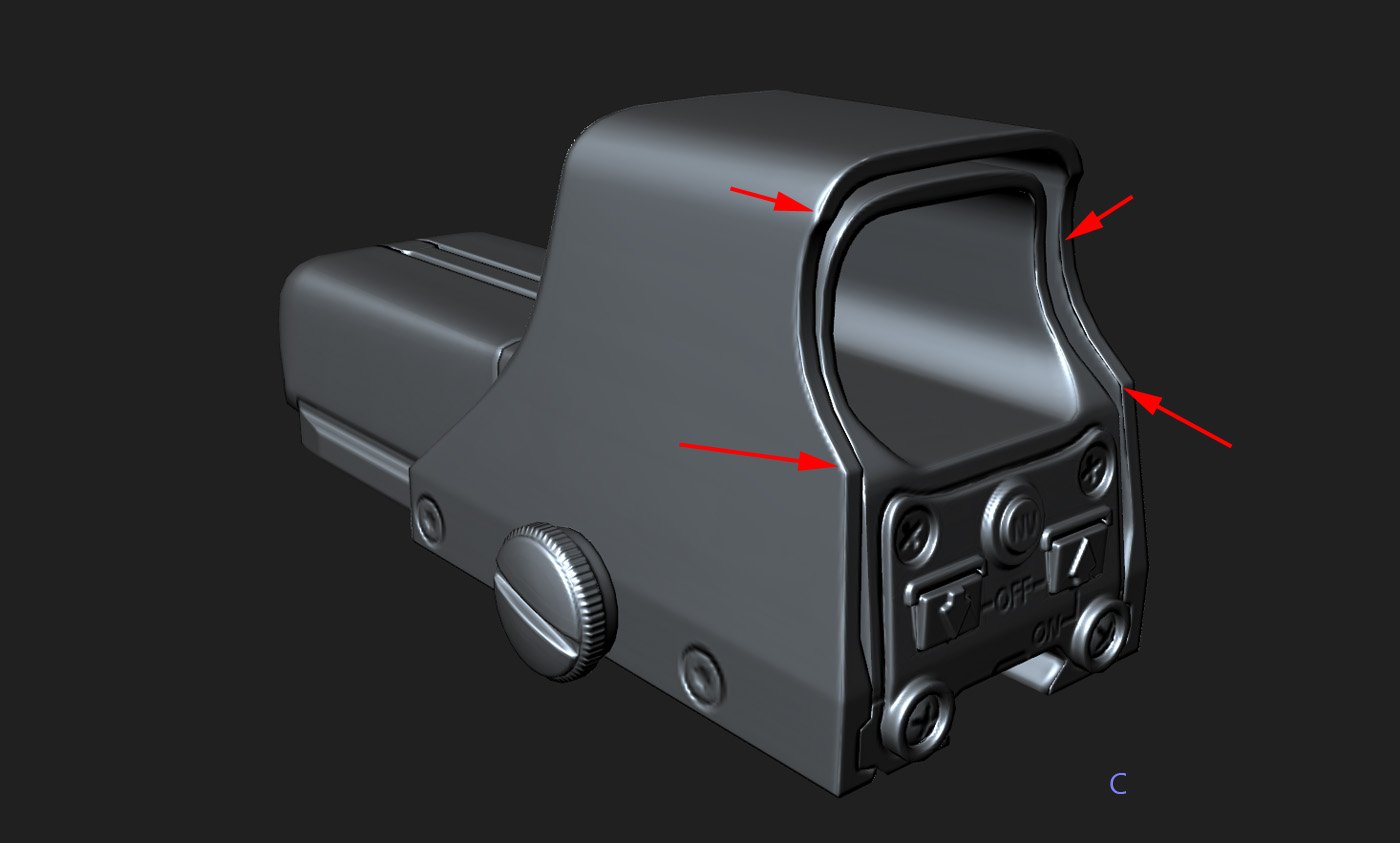

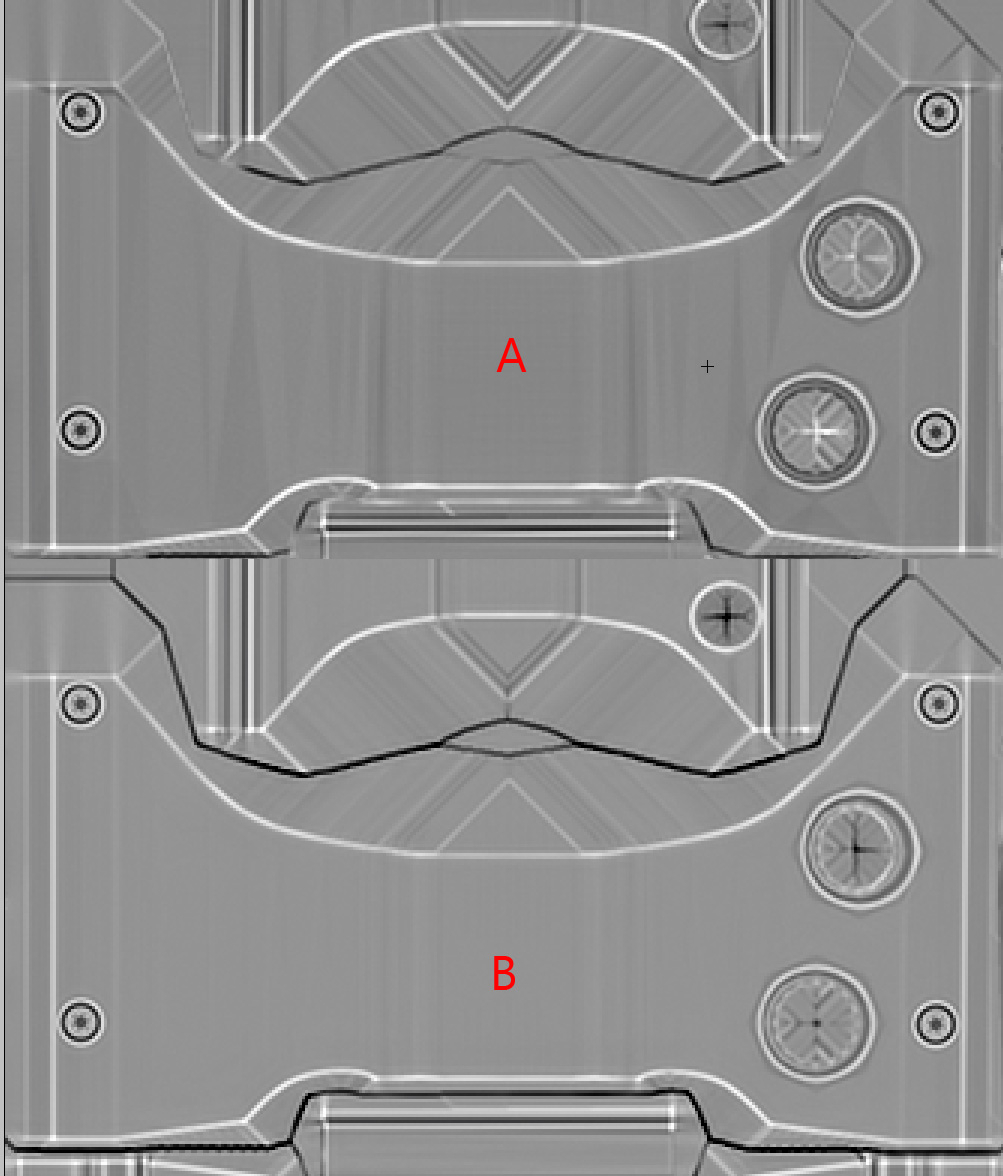

Ok, so first things first. This is a test mesh I created to show off 3Point Shader quality mode. What that means is this mesh was created specifically for a synced normals workflow and to show off the benefits of that workflow. There isn't an excessive amount of uv seams, in fact there are fewer than I normally would use even with a synced workflow.

A: Soft edges for the entire model, "Averaged projection mesh"

B: Hard edges at uv seams, "Averaged projection mesh"

C: Hard edges at uv seams, "Explicit mesh normals" projection.

As you can plainly see, there are absolutely no visual drawbacks to using method B. None, nada, zip. There aren't visual seams, aliasing, or any other issues like that. Not even where you might expect them, like the softer shapes in the front of the sight where I would normally soften the edges (helps with lods mostly).

In fact, if anything B looks the best, as there are extra artifacts, again what I like to call "resolution based smoothing errors" on A in more spots than on B(they have the same issues where the smoothing is the same, of course).

Now you may think this is super subtle, and yes it is, but the simple fact is B gives better results than A. When we get further down the mip chain this issue becomes more apparent on the larger shapes.

Now, we can get into a very subjective discussion about how important this really is, because naturally with lower mip-maps you're going to be viewing the object from a further distance, but it is again very clear that B gives you better results. Also, most games have texture quality settings, so if a user is playing on low, or medium, he's going to see these mips sooner. Even if your model uses a 4096 texture, that doesn't mean that is what will be displayed in game, and in fact, it will almost never be using that high of a mip in a real situation, only when the mesh in question is larger than your screen resolution would it use a mip that high.
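To put rough numbers on the mip point, here is a back-of-the-envelope sketch. It is a deliberate simplification: real GPUs pick mips per pixel from uv derivatives, while this assumes uniform texel density, a head-on view, and no quality-setting bias. The function name and parameters are made up for the example.

```python
import math


def estimated_mip_level(texture_size, screen_pixels_covered):
    """Rough estimate of which mip level gets sampled.

    texture_size: width of the top mip in texels (e.g. 4096).
    screen_pixels_covered: how many screen pixels the full width of the
    texture is stretched across. Mip 0 is only needed once one texel maps
    to at least one screen pixel.
    """
    if screen_pixels_covered <= 0:
        raise ValueError("screen_pixels_covered must be positive")
    last_mip = int(math.log2(texture_size))
    level = math.log2(texture_size / screen_pixels_covered)
    return min(max(level, 0.0), float(last_mip))


for pixels in (8192, 4096, 1024, 256):
    print(pixels, estimated_mip_level(4096, pixels))
```

Under these assumptions, a 4096 map whose full width covers 1024 screen pixels is already two mips down, and it only reaches the top mip once it spans 4096 pixels or more, which is wider than most screens.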

Here we have method C, and the problems here should be evident. With this projection method, you create seams along any hard edges from the gaps in projection. I won't do a huge write up here, this is simply something you should avoid doing.

I also did not bother to show Soft edges with "Explicit mesh normals" projection, as that gives you the same results as method A.
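The gap in method C can be shown with a tiny 2D sketch of a 90 degree corner. It assumes the simplest possible model: with explicit normals and a hard edge, each face fires its rays along its own face normal at the shared corner, while an averaged projection mesh (or a soft edge) uses one averaged direction for both faces. The function names are hypothetical and no real baker is being reproduced here.

```python
import math


def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)


def angle_between_deg(a, b):
    a, b = normalize(a), normalize(b)
    dot = sum(x * y for x, y in zip(a, b))
    return math.degrees(math.acos(max(-1.0, min(1.0, dot))))


def corner_ray_directions(normal_a, normal_b, hard_edge, averaged_projection):
    """Ray directions used at the shared corner by face A and face B."""
    averaged = normalize(tuple(x + y for x, y in zip(normal_a, normal_b)))
    if averaged_projection or not hard_edge:
        # One shared direction: the rays sweep continuously across the corner.
        return averaged, averaged
    # Explicit normals with a hard edge: each face keeps its own direction.
    return normalize(normal_a), normalize(normal_b)


def projection_gap_deg(normal_a, normal_b, hard_edge, averaged_projection):
    """Angle of the wedge at the corner that no ray is fired into."""
    rays = corner_ray_directions(normal_a, normal_b, hard_edge, averaged_projection)
    return angle_between_deg(*rays)


top, side = (0.0, 1.0), (1.0, 0.0)  # face normals of a 90 degree corner
methods = {"A": (False, True), "B": (True, True), "C": (True, False)}
for name, (hard_edge, averaged) in methods.items():
    print(name, round(projection_gap_deg(top, side, hard_edge, averaged), 3))
```

Methods A and B leave no wedge at the corner, while method C leaves the whole angle between the two face normals uncovered. Any highpoly detail inside that wedge is never hit by a ray, which is where the seam comes from.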

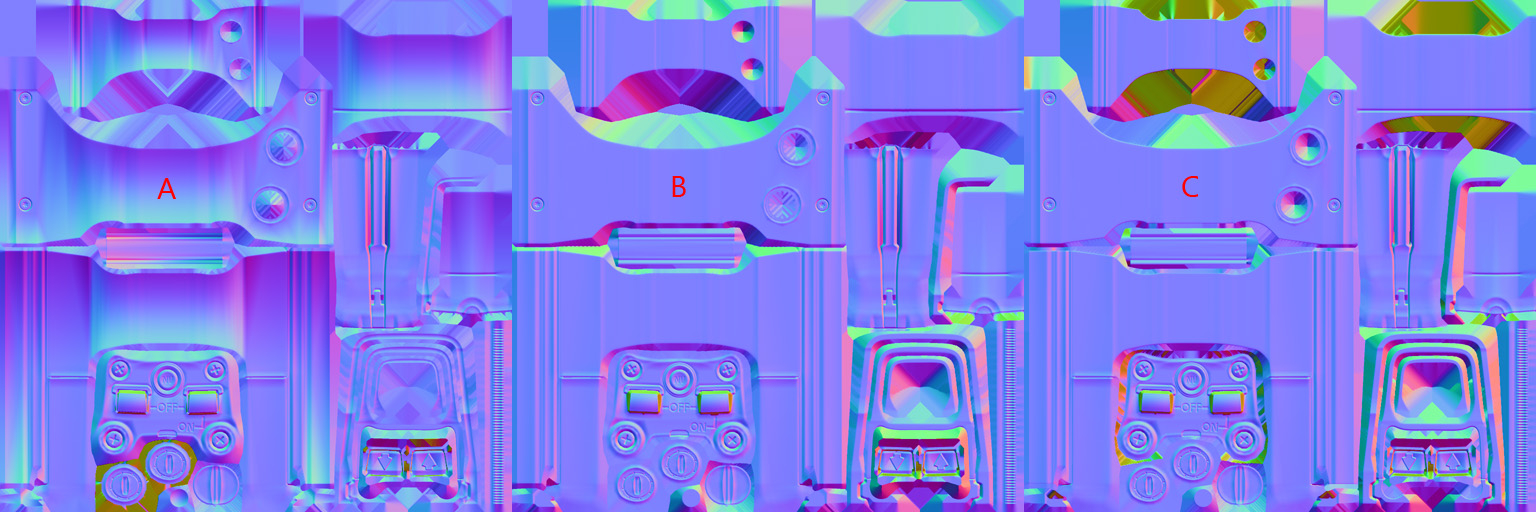

Here is the normal map content. I did not bother doing a compression comparison, simply taking a look at the content should suffice here.

UV layout:

Here is an example showing what happens when you try to pull a "detail map" out of CrazyBump with methods A and B. Shown at 300% in Photoshop so it's clear what I'm talking about. This stuff can be a pain to edit out if pulling these detail maps is part of your workflow. The fewer of these little artifacts the better.

There, that should cover the visual examples for all of the benefits of using method B over method A.

Replies

Yes! Agreed, it's totally cool to use hard edges when you need to. My main argument is that when you have a synced tangent basis, you don't need to use all hard edges. Glad we can agree on something, lol

Yeah this was a big point I was trying to make, there really aren't hard "rules" for any of it, just a complex set of different considerations. All of which have some sort of impact on your workflow.

yes, exactly.

You mentioned this briefly, but I think it's important enough to warrant its own explanation.

Yeah, a lot of that stuff is covered in the other thread here too: http://www.polycount.com/forum/showthread.php?t=81154

How your topology affects projection direction and all of that stuff, so I didn't want to be too redundant, but you're right it all works together.

Er, I don't know why UDK would change it. It's always going to be more accurate to split more, because you're not using as broad a range of colours or as many gradients in the resulting image. No matter how synced the bake is, there will always be the core issue that you're interpolating between vectorised data using discrete bitmap data (and to make matters worse, it's often compressed in some way). The idea of splitting UVs and SGs is to make the normal maps do less of the work.

Absolutely right. To go even further, when we're talking about using hard edges with a synced system, we're not saying you should add more UV seams than you naturally would, we're saying that you're always going to have uv seams, so you might as well harden your edges there, because doing that brings only positives.

Synced normals are absolutely essential for keeping uv seams to a minimum, but you can't uv a mesh without seams, so you might as well take your "free" hard edges. Again though, it's not "required" by any means, there just isn't any reason not to (when using an averaged projection mesh).

I know I sort of explained this already, but it's worth mentioning twice.

From my own baking experience: if everything is soft, I get gradients in my maps... Sometimes it's very small, sometimes it's pretty bad. If my thought process is wrong, please let me know :) I want to have a pipeline that I can follow consistently.

Could someone that uses only soft edges in their bakes please post some images of a successful bake.

My beef, if any, is with Epic/docs.

Earthquake, you're my favorite poster and the god damned Dr. House of polycount. I share your frustration with people not doing the tests or digging into it before fully committing to a process (not commenting on the artists here, just polycount/the industry in general). Your insistence on this issue has made me dig into it, and I am super grateful for your unrelenting spirit to explain this stuff over and over. I've come to the same conclusions as EQ.

Hawken is way more "texture heavy" than poly heavy, so I would say splits are fine, as I would rather take the better compression of the maps and their sizes along with a few more verts on the card.

The point I want to make is that this information was horribly communicated/documented by Epic/Unreal in the first place. You guys (MM, polygoo, Earthquake) are at the top of the chain in terms of game art and understanding, and look at this muddy discussion. Where does that leave the new guys and less technical 3d artists? I showed up to a game studio in which no one had proper bakes. Computer artist folk are fairly smart and passionate about their quality, so they want the best, but this specific issue seems to have just blown past everyone, and it's hard to understand if you're not into tech. The producers/leads need to know too, so they can budget the time.

Hawken will have to go back and fix their other mechs. While not a crazy big deal, when you're indie, everything counts.

As far as Hawken goes, when I showed up, all the bakes were all soft and had tons of errors, but I worked mostly on the "square military faction", so the errors were more pronounced. I didn't get a close look at polygoo, MM or lone wolf's work, so I can't comment on this model specifically. If it works it works, all games are hacks, but the peeps that really want to dig into this and do things to the best of their ability will find dirty, conflicting info. I've been in games for a while, and this topic did not become super clear until I saw Earthquake repeating a few things and insisting.

Now for conspiracy (although I'm probably wrong): I think Epic would not emphasize or clarify this info because it shows the flaws of their product, and they are trying to get UE4 out, so there's no need to fix tangents in UE3 because they would have to change the lighting model etc., and it will just be dynamic in UE4. So for the last few years they have just been quiet about it, riding it out (no basis for any of this part).

Earthquake, you're not the hero polycount wants, but you're the hero it needs.

For new people, here is a nice vid from Alec Moody that will take you through the problems and solutions in 3ds Max:

http://www.3dmotive.com/training/3ds-max/baking-tips-and-tricks/?follow=true

My method for making my models is what EQ calls "Explicit mesh normals".

I feel like my way of working the past years is totally wrong... even if I thought I always understood what I was doing.

I'll just re-quote this here, as I think it goes over what you're asking

I'll try to get some images up at some point, still running through my head the best way to visualize some of this.

I was actually in the same boat for a long time. I worked in exactly the same way, I was even a big proponent of object space maps a few years ago, partially due to my frustration with broken-tangent-basis normals, but looking back on it it really had more to do with my fundamental lack of understanding on how all this baking stuff worked together. The best thing we can do is just sort of spread the word on all of this, because there is so much misinformation out there and it can be hard to wade through it all.

Meshiah: Man, you would be surprised how common exactly what you're describing is at even major, top end studios. For the longest time it was just sort of something a lot of people didn't understand. It's really an industry wide problem, getting accurate information on normal maps in general. I think we're starting to get more traction these days, and I see a lot more people posting on polycount that really get it, and that makes me happy.

Like even the synced normals stuff, I'm sure some of you guys remember when me and per/crazybutcher/mop were bringing this stuff up a couple years ago, there was a lot of resistance. A lot of people telling us "oh we've never had issues with smoothing errors, you must be doing something wrong". But look at it now, UDK has much improved support for explicit normals and you hear a lot more people not only understanding the tangent basis issue but singing its praises and demanding to get synced up workflows at work.

I suspect the averaged projection mesh is actually way less commonly used than I thought it was. If you couldn't visualise and easily manipulate a cage, I could totally see it being pretty unintuitive. I guess there's also a lot of cases where people don't get how normals work, particularly averaged vs split ones. I've certainly seen a ton of hard surface work with those weird bevel-then-seam deals on every hard edge. TBH it is fairly unintuitive. Only in CG can you have a 90 degree angle which isn't actually a 90 degree angle.

I think part of the difficulty too is that people have jumped right into current gen in the past 5 years to a decade. When you work on low poly stuff it's a lot easier to see how geometry actually works in CG. I'd probably recommend anyone wanting to go into hard surface to master lowpoly first. I dunno.

IMHO the best teacher is experience with this. Most of the people I've encountered personally who are clueless on this particular topic reveal themselves to have done almost no hard surface work. You get a feel for how normals work and just intuit the right way to do it after a couple of complex models. Although maybe that's a self-centric view. It happened to work for me.

Hard edges do affect your normal map, and you also get visible hard edges/seams, even if you are using an averaged projection mesh.

Here is an example:

Low poly model (all hard edges) and high poly model:

http://s1287.photobucket.com/albums/a624/rahulcamma/?action=view&current=geo_zpsf5e8f937.jpg

Normal map baked in Maya using match: "Geometry Normals":

http://s1287.photobucket.com/albums/a624/rahulcamma/?action=view&current=geo_zpsf5e8f937.jpg#!oZZ4QQcurrentZZhttp%3A%2F%2Fs1287.photobucket.com%2Falbums%2Fa624%2Frahulcamma%2F%3Faction%3Dview%26current%3Dmaya_normalmap_zps5109422f.jpg

Normal map baked in Xnormal using a cage:

http://s1287.photobucket.com/albums/a624/rahulcamma/?action=view&current=geo_zpsf5e8f937.jpg#!oZZ1QQcurrentZZhttp%3A%2F%2Fs1287.photobucket.com%2Falbums%2Fa624%2Frahulcamma%2F%3Faction%3Dview%26current%3Dxnormal_zpse6fe1715.jpg

Maya bake result:

http://s1287.photobucket.com/albums/a624/rahulcamma/?action=view&current=geo_zpsf5e8f937.jpg#!oZZ3QQcurrentZZhttp%3A%2F%2Fs1287.photobucket.com%2Falbums%2Fa624%2Frahulcamma%2F%3Faction%3Dview%26current%3Dmayabake_zpsc38ccf16.jpg

Xnormal bake result:

http://s1287.photobucket.com/albums/a624/rahulcamma/?action=view&current=geo_zpsf5e8f937.jpg#!oZZ2QQcurrentZZhttp%3A%2F%2Fs1287.photobucket.com%2Falbums%2Fa624%2Frahulcamma%2F%3Faction%3Dview%26current%3Dxnormalbake_zps5401fece.jpg

In both results you get a seam.

A. Your images aren't showing up for some reason. I can view them if I copy out the URL, don't know what's going on there.

B. You are getting artifacts in these examples because you do not have uv seams where you are setting your edges to hard. Please read the entire thread, or at minimum this post: http://www.polycount.com/forum/showpost.php?p=1688428&postcount=47

Hard edge along uv seam sorted the problem. Thanks for the reply.

I would appreciate it if you could also explain what exactly tangents and binormals mean.

What is their purpose? The vertex normal is responsible for the shading of a polygon. What do tangents and binormals do?

I read on polycount somewhere that when you break uvs you are breaking tangency.

I'm not able to understand what you mean by that.

Oh boy, well, first off I will tell you that I do not fully understand these terms. Generally these terms will have more relevance to graphics programmers/shader programmers than to your average artist, but if you're interested in the nuts and bolts math of the matter you can check out this:

http://www.terathon.com/code/tangent.html

From a simplified perspective, the reason you get seam artifacts along hard edges where you do not have uv splits is this:

When you attempt to bake the normal map, the baker will try to draw two entirely different colors(to represent different angles in the normal map) along the same edge. What happens is these two differing angles rendered on top of each other creates a value in the normal map that is incorrect for either side of the edge, thus giving a "seam" like appearance.

I thought this was actually due to texture bleeding. The two faces will require two different colors on the normal map, but since you are wrapping a raster image (pixels) around a vector model, you are going to get texture from one face bleeding over into the uv's of another face. Hence the seam. Images incoming, making an example bake.

EDIT: Image delivered

To be explicit, what I did was bake out a 512 texture, then blow it up to 2048 and overlay some uvs that I exported at 2048. Just as a hacky way of showing that since your pixels don't match up perfectly to your uv's, you will inevitably get pixels bleeding over from one face's uvs into another face's uv's.

I am pretty sure splitting the uvs fixes the problem, simply because it prevents texture bleeding. Give each face its own buffer area and you don't have to worry about texture bleeding.
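Both explanations above come down to one texel having to serve two faces that want different values. Here is a minimal sketch of that, assuming the texel ends up holding a coverage-weighted blend of the two normals. The two "wanted" normals are made-up illustrative values, not taken from any actual bake.

```python
import math


def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)


def angle_between_deg(a, b):
    a, b = normalize(a), normalize(b)
    dot = sum(x * y for x, y in zip(a, b))
    return math.degrees(math.acos(max(-1.0, min(1.0, dot))))


def blended_texel(normal_a, normal_b, coverage_a=0.5):
    """Normal stored in a texel that straddles the edge between two faces.

    coverage_a is the fraction of the texel that face A covers. This is a
    simplifying assumption about how the overlap gets resolved.
    """
    mixed = tuple(a * coverage_a + b * (1.0 - coverage_a)
                  for a, b in zip(normal_a, normal_b))
    return normalize(mixed)


# Hypothetical tangent-space normals each face needs along a hard edge
# that has no uv split.
wanted_a = normalize((-0.38, 0.0, 0.92))
wanted_b = normalize((0.38, 0.0, 0.92))

stored = blended_texel(wanted_a, wanted_b)
error_a = angle_between_deg(stored, wanted_a)
error_b = angle_between_deg(stored, wanted_b)
print(f"face A is off by {error_a:.1f} degrees, face B by {error_b:.1f} degrees")
```

Whatever single value lands in that texel is wrong for both faces by a clearly visible angle. Splitting the uvs gives each face its own texels plus padding, so neither has to compromise.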

Yeah that may be the issue, or may be a bit of both (to be honest I'm not entirely sure I understand the difference between what you've said and what I've said). It's something that I can't give a really accurate technical explanation on, but it's very easy to show. =P

"When you attempt to bake the normal map, the baker will try to draw two entirely different colors(to represent different angles in the normal map) along the same edge. What happens is these two differing angles rendered on top of each other creates a value in the normal map that is incorrect for either side of the edge, thus giving a "seam" like appearance."

this explanation is good enough for me. Thanks for the reply

This is viewed in Maya and baked in Maya, which you've stated gives you synced results in UDK. Seriously, give it a try, and if I'm wrong on any of this stuff post your results. If there are some sort of UDK specific exceptions I will be happy to add them to the main post.

It's really simple. Bake a mesh with all soft edges, and then do another bake, but with hard edges at the uv splits (I posted a Maya script to do this, it takes two seconds). Make sure you have an averaged projection mesh to avoid the gaps you get with method "C".

I'm not going to do example content for every engine out there, this is basic fundamental normal map stuff.

Here is a shot with AA on my GPU turned off and no resizing in PS. No aliasing that isn't present in the completely smoothed version.

Anyone who's following this thread should also check out this one.

Face weighted normals are a nice middle ground between softened and hardened normals, that can greatly reduce artifacting from having soft normals, without making you feel like you should be hardening and cutting more (though like EQ has been saying, there is never a downside to hardening uv seams).

Yeah this certainly helps with a lot of the issues you get when using entirely averaged smoothing for your mesh. But not quite as good as hard edges in most cases, which like you say are free, so...:poly121:
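For reference, here is a rough sketch of what face weighted normals boil down to. It assumes the common area-weighted variant, where each face pulls on a vertex normal in proportion to its area. Scripts differ in the exact weighting they use, so treat this as one possible version. The mesh data is a hypothetical large flat face meeting a small bevel-like face.

```python
import math


def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v) if length > 0.0 else (0.0, 0.0, 0.0)


def face_weighted_normals(positions, triangles):
    """Vertex normals as the area-weighted average of adjacent face normals."""
    sums = [[0.0, 0.0, 0.0] for _ in positions]
    for i0, i1, i2 in triangles:
        # The cross product's length is twice the triangle's area, so adding
        # it without normalizing weights each face by its area.
        n = cross(sub(positions[i1], positions[i0]),
                  sub(positions[i2], positions[i0]))
        for index in (i0, i1, i2):
            for axis in range(3):
                sums[index][axis] += n[axis]
    return [normalize(total) for total in sums]


positions = [
    (0.0, 0.0, 0.0),    # 0: vertex shared by both faces
    (-10.0, 0.0, 0.0),  # 1
    (0.0, 10.0, 0.0),   # 2
    (0.0, 1.0, 0.0),    # 3
    (0.0, 0.0, -1.0),   # 4
]
triangles = [
    (0, 2, 1),  # large face, normal points along +Z
    (0, 4, 3),  # small face, normal points along +X
]

shared_normal = face_weighted_normals(positions, triangles)[0]
print(shared_normal)
```

The shared vertex normal ends up almost exactly along the big face's normal, so the large flat surface shades flat and the small face absorbs the bend. That is why it reduces gradients without adding any uv seams.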

Seriously, think about it. You will never see such compression errors up close, because the chance of such low mip levels showing up in game is rare and only for low LODs. Not to mention that with the diffuse map and other maps added, this talk becomes pointless. Compression will stop being a factor sooner or later, so you are in fact overanalyzing a trivial issue.

The other thing you mention, the destructive method, is really not an issue in my opinion. Chances are that if a client is going to change the work I did, it is going to be downgraded to begin with. No point thinking about that. And in most cases the changes would not be drastic enough to warrant this workflow.

You say that it's not necessary to break UV seams everywhere, just put hard edges where the UV seams are. However, your own work (lica model) contradicts that, since I see you putting hard edges willy-nilly, and I am only guessing those are all UV splits as well. I would hate to texture an asset like that.

I'd rather use my workflow and save the time of not thinking about hard edges, breaking UVs in places that I want to paint continuously in Photoshop, setting up cages, etc. Weighing all the pros and cons, it is up to the artists what they want to do. I care about the tech as much as the next artist, but this is just being silly over minor things. I'd rather put more effort into the big picture and what the final asset looks like, with the least amount of effort and time spent.

True, I suppose I should have said that face-weighted normals are a good SUPPLEMENT to proper use of hard edges.

EDIT: Lol, I like the new thread name.

Yeah absolutely, then you get the benefits of hard edges, plus the benefits of face weighted normals on areas where you don't want extra uv seams.

I really hope people read this as these problems come up again and again and ...

Wiki worthy stuff I would say.

From your response I seriously wonder whether you've taken the time to read what I've written, or if you simply do not understand it. I've explained it all in no uncertain terms but you're still confused about basic issues.

Like the uv seams thing you just said, if you would have bothered to read any of this stuff you would see that I've always suggested *using the same uv layout*(IE NO EXTRA UV SEAMS) and simply setting the existing uv borders to hard edge provides various benefits. Really, the example asset I just posted uses the same uv layout for all methods and thus the same amount of in-game verts.

If you don't want to do anything but bake in xnormal with the basic ray trace settings that is fine, but please try to follow along in the conversation, otherwise you're simply spreading misinformation.

If you're baking in Maya, the difference between the two methods is literally the time it takes you to run a script to set your uv borders to hard edge(and a little extra if you need to do additional tweaks). Same with max. Only in Xnormal would there be a noticeable difference in workflow speed.
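To make the "script to set your uv borders to hard edge" idea concrete, here is a rough, app-agnostic sketch in plain Python. It is not a Maya or Max script and not anyone's actual tool from this thread: the mesh layout (faces as lists of `(vertex_index, uv_index)` corners) and the function name `uv_border_edges` are assumptions made for illustration. It only finds the shared edges whose UVs differ between the two faces, which are the edges you would then set to hard in your app.

```python
from collections import defaultdict

def uv_border_edges(faces):
    """Return shared edges that sit on a UV border.

    faces: list of faces, each a list of (vertex_index, uv_index) corners.
    (Hypothetical layout, chosen only for this sketch.)
    Edges are returned as (low_vertex, high_vertex) pairs.
    """
    edge_uvs = defaultdict(list)
    for face in faces:
        n = len(face)
        for i in range(n):
            (v0, t0), (v1, t1) = face[i], face[(i + 1) % n]
            # Store the UV pair in the same order as the sorted vertex pair,
            # so both faces describe the edge in the same direction.
            if v0 < v1:
                edge_uvs[(v0, v1)].append((t0, t1))
            else:
                edge_uvs[(v1, v0)].append((t1, t0))
    # An edge is a UV border if the faces sharing it disagree on its UVs.
    return {edge for edge, uvs in edge_uvs.items() if len(set(uvs)) > 1}

# Two quads sharing the edge between vertices 1 and 2.
quad_a = [(0, 0), (1, 1), (2, 2), (3, 3)]
welded_b = [(1, 1), (4, 4), (5, 5), (2, 2)]   # same UVs on the shared edge
split_b = [(1, 6), (4, 4), (5, 5), (2, 7)]    # UV seam on the shared edge

print(uv_border_edges([quad_a, welded_b]))  # set()
print(uv_border_edges([quad_a, split_b]))   # {(1, 2)}
```

In a real pipeline the returned edges would be fed to whatever your app uses to harden edges or split smoothing groups; that step is deliberately left out here.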

the difference is, i would never put UV seams like you did in the example here (on a lot of 90 degree angles i would use a soft edge), which pretty much makes the majority of your workflow not something i can work with entirely. it might be something i would do in rare cases, which i have already said many times in the past. but i would not do this 80% of the time.

Hardening UV seams is a straight benefit - no costs - no matter where your UV seams are, or how many UV seams you have.

Earthquake isn't saying "HARDEN AND SPLIT EVERYTHING".

He's saying "You're gonna have UV seams no matter what, and hardening them is a free improvement, so you might as well harden them"
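A small sketch of why this is free in terms of vert count, under the usual assumption that an engine splits a vertex whenever its position, UV, or normal differs between face corners. The corner tuples and the name `ingame_vert_count` are made up for the example; real engines may split on other attributes too.

```python
def ingame_vert_count(corners):
    """Count unique (position_id, uv_id, normal_id) combinations.

    Each unique combination is assumed to become one in-game vertex.
    """
    return len(set(corners))

# Two quads sharing the edge between positions 1 and 2.
quad_a = [(0, 0, 0), (1, 1, 1), (2, 2, 2), (3, 3, 3)]

# Second quad, four variants of the shared edge:
soft_no_seam = quad_a + [(1, 1, 1), (4, 4, 4), (5, 5, 5), (2, 2, 2)]
soft_on_seam = quad_a + [(1, 6, 1), (4, 4, 4), (5, 5, 5), (2, 7, 2)]
hard_on_seam = quad_a + [(1, 6, 6), (4, 4, 4), (5, 5, 5), (2, 7, 7)]
hard_no_seam = quad_a + [(1, 1, 6), (4, 4, 4), (5, 5, 5), (2, 2, 7)]

print(ingame_vert_count(soft_no_seam))  # 6
print(ingame_vert_count(soft_on_seam))  # 8, the UV seam already split the verts
print(ingame_vert_count(hard_on_seam))  # 8, hardening the seam adds nothing
print(ingame_vert_count(hard_no_seam))  # 8, a hard edge without a seam does cost verts
```

The UV seam has already paid for the split, so hardening that same edge costs no extra verts. Only a hard edge placed where there is no seam raises the count.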

When I uv I try to do a good mix of low distortion, and easy to pack shapes. If you go for minimum seams at all costs its a trade off, but if that's the way you work what I've described wouldn't give you as much of a benefit.

Right.

you're getting incorrect shading on the outer edges on both of the hard edge models

areas in red should be like areas in green

i only bring it up since you care about normal maps so much

because i dont create UV seams like EQ for the most part. i dont have them in the same manner and that makes this workflow not so important, if at all.

as he mentioned already

This is just a buggy maya normal map display thing, you won't see it ingame. Or with CGFX shaders inside of maya IIRC.

To you, very specifically.

Most hard surface artists that I know and have worked with UV in a very similar manner to myself.

i guess, but i am not restricted to hard-surface, organic, etc. i do it all.

anyways, thanks for the write up. its always interesting to see other workflows and you were right, i did learn something, but i will have to figure out when to use it

Also do people still use this detail map thing you're describing in Crazybump EQ? Doing it in xNormal directly from the hipoly information (curvature map) works really well now and gives true curvature information, seems like a much better solution.

Great thread once again.

To get an "averaged projection mesh" in Xnormal, you will be using a cage, either exported from your 3d app (in Max you can set up a projection modifier and then export as SBM with your cage) or by creating a cage in Xnormal's 3d viewer. So you don't need to use those settings, as the cage overrides them.

Yeah for modular stuff and env stuff it can make a lot of sense to not have your normal maps doing the heavy lifting.

I'll have to look into the curvature thing in XN again, the last time I used it it wasn't really a proper curvature map. But yeah, a nice curvature map is going to be better for a few reasons.

Ahh okay, I do see a tiny bit more aliasing on the hard edge bake, but since you uv'ed both of these the same and you put seams in the same spots, the differences won't be huge. Thanks for the writeup, definitely good to see, but yeah I think this depends on your UV workflow and how much distortion vs seams uving you do. In the end we are talking about some pretty subtle stuff here, and depending on workflow, I think a mixture of both methods is best depending on the engine.

In the viewport there really isn't any difference; any difference you're spotting there is just the varying angles of the meshes. But hey, it (the aliasing) might show up more in UDK or something.

As far as the difference between A and B, yeah it's subtle. It all sort of adds up but it's subtle, and again, it's free to do, so there really isn't a reason not to do it. No one has brought up a good reason for not doing it yet at least (if you're using an averaged projection mesh to bake already). That's in a synced workflow at least. With an engine that isn't synced it makes a huge difference (you would want extra uv seams though too).

The greater point of the thread is just to make it very clear how everything works: projection types, vert usage, etc etc. Not so much OMG ADDING HARD EDGES IS SO MUCH BETTER or anything like that; it's very subtly better for some really esoteric reasons. It's really this big interconnected issue that a lot of artists don't really grasp, so I wanted to sum it all up in a nice concise package.

yeah for sure man

In Maya I set my hard and soft edges where needed and export out the OBJs. Then I have just been going into xnormal and doing a global extrusion for the cage to make sure the highpoly is completely inside, then saving out the cage. I have been getting pretty good results to my knowledge, but was wondering if I should be building a custom cage in maya.
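For reference, a global cage extrusion like the one described above can be thought of as pushing every lowpoly vertex outward along its averaged normal, ignoring hard edges. The sketch below is plain Python and only illustrates that idea. It assumes unweighted averaging of face normals and triangles wound so their normals point outward; the name `averaged_cage` is made up, and this is not xNormal's or Maya's actual implementation.

```python
import math

def _sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def _cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def _normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v) if length > 0 else (0.0, 0.0, 0.0)

def averaged_cage(positions, triangles, distance):
    """Offset each vertex by `distance` along its averaged normal.

    positions: list of (x, y, z); triangles: list of (a, b, c) indices,
    wound so that face normals point outward (an assumption of this sketch).
    """
    sums = [[0.0, 0.0, 0.0] for _ in positions]
    for a, b, c in triangles:
        edge1 = _sub(positions[b], positions[a])
        edge2 = _sub(positions[c], positions[a])
        n = _normalize(_cross(edge1, edge2))
        # Every vertex of the face receives the same face normal, so
        # smoothing splits on the lowpoly do not affect the cage.
        for v in (a, b, c):
            for i in range(3):
                sums[v][i] += n[i]
    cage = []
    for p, s in zip(positions, sums):
        n = _normalize(s)
        cage.append(tuple(p[i] + distance * n[i] for i in range(3)))
    return cage

# Regular tetrahedron centred on the origin.
tet_positions = [(1, 1, 1), (1, -1, -1), (-1, 1, -1), (-1, -1, 1)]
tet_triangles = [(0, 1, 2), (0, 3, 1), (0, 2, 3), (1, 3, 2)]
tet_cage = averaged_cage(tet_positions, tet_triangles, 0.1)
```

A single global distance is the simplest case. A custom cage is the same idea with per-vertex tweaks where a uniform push either misses the highpoly or intersects neighbouring geometry.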